Eigenlandscape art

What happens when we run singular value decomposition (SVD) on images? In this post I’ll show how to do SVD on images with Python and some of the interesting visual effects that result.

Eigenfaces

Eigenfaces are visualisations of the eigenvectors of the covariance matrix of a set of face images, where each image is flattened into a vector and the vectors are stacked as the rows of a matrix. They come up in the field of automatic image and face recognition.
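To make the link between the covariance matrix and the SVD concrete, here is a minimal sketch using only numpy. The data is random and stands in for a stack of flattened images (the sizes and variable names are my own assumptions, not part of the experiment). It checks that the leading eigenvector of the covariance matrix and the first right singular vector of the centred data point in the same direction.

```python
import numpy as np

rng = np.random.default_rng(0)

# Stand-in for a stack of flattened images: 10 "images", 12 "pixels" each.
X = rng.random((10, 12))

# Centre each pixel column, as PCA does before decomposing.
Xc = X - X.mean(axis=0)

# Route 1: eigenvectors of the pixel-by-pixel covariance matrix.
cov = Xc.T @ Xc / (X.shape[0] - 1)
eigvals, eigvecs = np.linalg.eigh(cov)   # eigh returns ascending eigenvalues
order = np.argsort(eigvals)[::-1]        # reorder to descending
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

# Route 2: SVD of the centred data; the rows of Vt are the same directions.
U, S, Vt = np.linalg.svd(Xc, full_matrices=False)

# Eigenvectors are only defined up to sign, so compare the absolute cosine.
alignment = abs(eigvecs[:, 0] @ Vt[0])

# Squared singular values, divided by (n - 1), are the eigenvalues.
variances_match = np.allclose(S**2 / (X.shape[0] - 1), eigvals[:len(S)])
```

Reshaping a row of `Vt` back to the image dimensions is what turns an eigenvector into a picture.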

Decomposition with sklearn

Klara-Marie den Heijer and I wondered what would happen when you applied the same transform to landscape photographs.

The first test was to take a bunch of hiking photos, flatten them, and visualise the components.

import glob

import matplotlib.pyplot as plt
import numpy as np
from skimage import io, transform
from sklearn.decomposition import IncrementalPCA
from sklearn.preprocessing import MinMaxScaler

# Read images as numpy arrays
file_names = glob.glob('Pieke/*.jpg')
images = [io.imread(file_name) for file_name in file_names]

# Scale to something smaller
scaled_images = [transform.resize(image, (155, 234)) for image in images]

# At this point each image is a numpy ndarray of shape (155, 234, 3).
# Flatten so we have ndarrays of shape (108810,)
fimages = [image.flatten() for image in scaled_images]

# Now do the decomposition
pca = IncrementalPCA()
timages = pca.fit_transform(fimages)

# Rescale the components to [0, 1] so we can interpret them as pixel
# intensities. Note that MinMaxScaler works column by column, so each pixel
# is scaled across components rather than each component on its own.
mms = MinMaxScaler()
ef = mms.fit_transform(pca.components_)

# Plot the component at index 6 (the seventh, counting from zero)
plt.imshow(ef[6].reshape((155, 234, 3)))

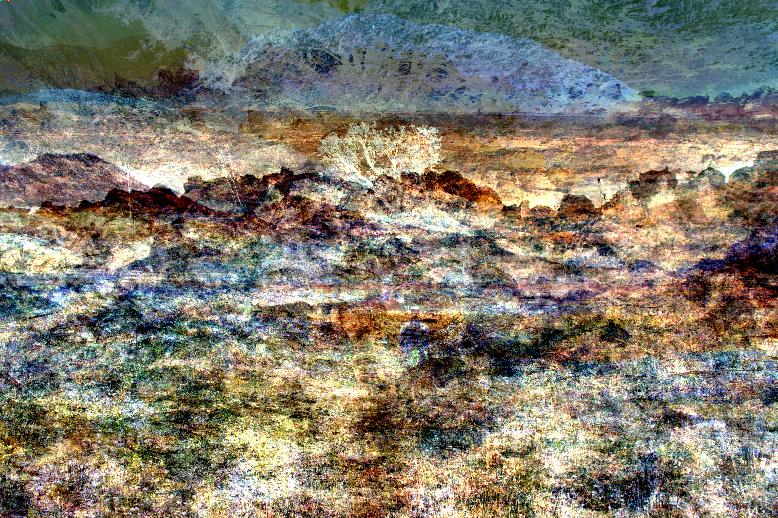

The resulting image reminds me a lot of some of Ydi Coetsee’s work.

Here is my favourite painting by Ydi:

Real landscapes as input

Taking about 20 of Klara’s landscape photographs:

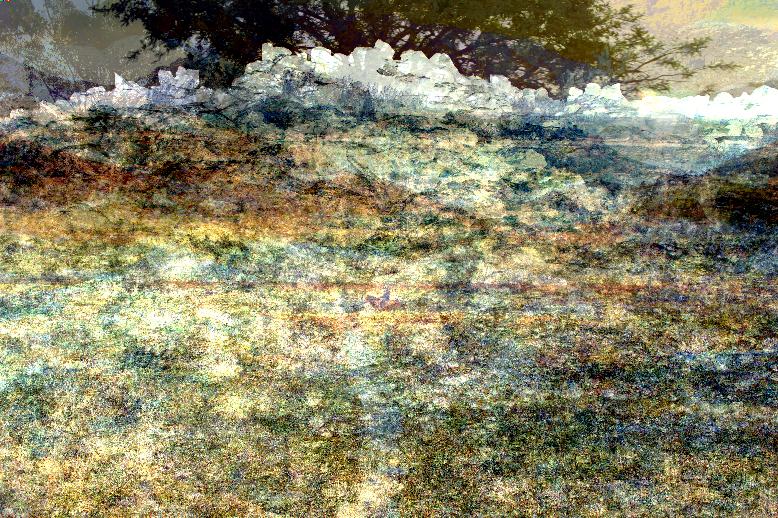

and pushing them through the algorithm gives some fascinating results:

It seems that we also inadvertently discovered a way to automatically generate kitsch watercolours:

Larger image set

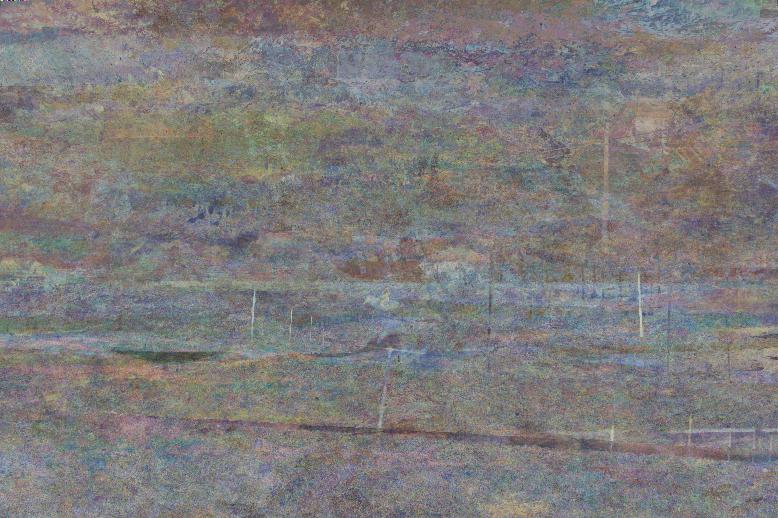

On a slightly larger set (85 images), the most interesting images are found in the first few eigenvectors (corresponding to the largest eigenvalues):

The very last component (in the sets we tried) always has a different visual quality, reminding me a lot of some impressionist paintings.

Note the horse silhouette in the centre of the image above, and the recurring telephone poles in this set: some features are hard to dilute.

Thoughts

There are a few more things we’d want to try, like

- different ways to scale the intensities of the components,

- different (and possibly much larger) sets of images,

- passing the resulting images through the algorithm again (initially I thought you would get the same images back, but some quick experimentation showed that this is not the case, and after repeating 100 times there is a lot of quality loss),

- doing the same with small video clips (I’ve always wanted to generate more SpongeBob episodes).
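On the first point, here is a rough sketch of three ways the component intensities could be scaled. It is worth knowing that `MinMaxScaler` rescales each column, so applied to a matrix of components it stretches each pixel across components rather than each component on its own. The random array below is a stand-in for `pca.components_`, and the three function names are hypothetical helpers, not anything from sklearn.

```python
import numpy as np

rng = np.random.default_rng(1)

# Stand-in for pca.components_: 5 components of a 4 x 6 RGB image, flattened.
components = rng.normal(size=(5, 4 * 6 * 3))

def scale_per_component(c):
    """Stretch each component (row) independently to [0, 1]."""
    lo = c.min(axis=1, keepdims=True)
    hi = c.max(axis=1, keepdims=True)
    return (c - lo) / (hi - lo)

def scale_per_pixel(c):
    """Stretch each pixel (column) to [0, 1] across components."""
    lo = c.min(axis=0, keepdims=True)
    hi = c.max(axis=0, keepdims=True)
    return (c - lo) / (hi - lo)

def scale_symmetric(c):
    """Map zero to mid-grey so positive and negative weights stay distinct."""
    peak = np.abs(c).max(axis=1, keepdims=True)
    return 0.5 + 0.5 * c / peak

per_component = scale_per_component(components)
per_pixel = scale_per_pixel(components)
symmetric = scale_symmetric(components)
```

Each result can be reshaped to `(4, 6, 3)` and passed to `plt.imshow` in the same way as before. I would expect the three to look quite different, but that is a guess until it is tried on real photos.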

Then there is the question of why such interesting images emerge. The resulting images are for me at least as interesting as the original photographs, and I have a suspicion that they will look better than a random linear combination of the original photos (must be tested though).
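One way to set up that test is to generate baseline images by mixing the photos with random weights. This is only a sketch: the random array stands in for the flattened photos, and `random_combination` is a hypothetical helper. I centre the photos first because the principal components are themselves linear combinations of the centred photos, which makes the comparison a little fairer.

```python
import numpy as np

rng = np.random.default_rng(2)

# Stand-in for fimages: 20 flattened photos with values in [0, 1].
height, width = 4, 6
photos = rng.random((20, height * width * 3))

def random_combination(images, rng):
    """Mix the centred images with random weights, then rescale to [0, 1]."""
    centred = images - images.mean(axis=0)
    weights = rng.normal(size=images.shape[0])
    mixed = weights @ centred
    return (mixed - mixed.min()) / (mixed.max() - mixed.min())

# One baseline image, reshaped so it can be passed to plt.imshow.
baseline = random_combination(photos, rng).reshape(height, width, 3)
```

Showing a handful of these next to the components, without labels, would be a simple blind comparison.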

At this stage I’d like to think that there is some connection between what happens in the brain and the SVD: that we somehow build up a prototype (archetype?) of images in a way that is similar to how the algorithm decomposes them. Since the eigenvectors are the directions along which the images vary most, it could also be that these directions are interesting almost by definition.
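The "directions of most variation" claim can be checked numerically. The sketch below uses synthetic data with one strong shared pattern (all names and sizes are assumptions for illustration) and compares the variance of the data projected onto the first component with the variance along 200 random unit directions.

```python
import numpy as np

rng = np.random.default_rng(3)

# Stand-in for flattened photos: 30 samples, 50 pixels, one shared pattern plus noise.
pattern = rng.normal(size=50)
strength = 3.0 * rng.normal(size=(30, 1))
noise = rng.normal(size=(30, 50))
X = strength @ pattern[None, :] + noise

# Centre the data and decompose it.
Xc = X - X.mean(axis=0)
_, S, Vt = np.linalg.svd(Xc, full_matrices=False)

# Share of the total variance captured by each component, largest first.
explained = S**2 / np.sum(S**2)

def projected_variance(direction):
    """Sample variance of the data projected onto a unit direction."""
    return np.var(Xc @ direction, ddof=1)

first_var = projected_variance(Vt[0])

# Compare against random unit directions in pixel space.
random_dirs = rng.normal(size=(200, 50))
random_dirs /= np.linalg.norm(random_dirs, axis=1, keepdims=True)
random_vars = np.array([projected_variance(d) for d in random_dirs])
```

No random direction can beat the first component here, since it maximises the projected variance over all unit directions. Whether maximal variance is also what makes the images visually interesting is a separate question.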

A quick Google search only found this similar investigation; maybe I’m not using the right search terms?

Klara also plans to paint some of the images as part of an ongoing study on something (ask her to explain).